By: Iain Cowie

A popular topic of discussion recently has been the emergence of Artificial Intelligence (AI) systems that can hold conversations with humans and carry out increasingly complex tasks. One such system garnering considerable attention is OpenAI’s chatbot platform, ChatGPT. If you’re unfamiliar, a chatbot is automated language software that communicates online with a live human in real time via text. In the past, a common use for chatbots has been customer service. You may have interacted with one when your online order was a week late or when you wanted to banish your freeloading ex-roommate from your Netflix account. You may have even ordered a pizza or received medical advice from a chatbot.

What is ChatGPT?

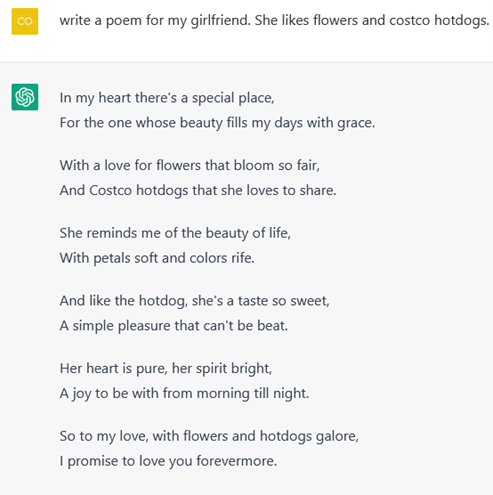

ChatGPT sets itself apart as the most sophisticated chatbot that we’ve seen so far, using AI to complete complex tasks like coding and writing reports, essays, or emails in a matter of seconds. It can seemingly come up with answers to anything you could think to ask it. For example, you could even ask it to write a new episode of your favourite TV show, or ask it to write a poem tailored perfectly to your significant other’s interests.

How does it work?

ChatGPT runs on a language model called a “Generative Pre-trained Transformer” (GPT). This means that, contrary to the belief that it searches the internet for information, ChatGPT is pre-trained: it generates responses based on information it has already learned through training and testing that included reinforcement learning and human feedback.

What are the concerns?

ChatGPT has captured the imaginations of many, with opinions varying from it being this generation’s revolutionary technological achievement to being a worrisome first step toward a dystopian future. Concerns have been raised in academia over students using ChatGPT to complete assignments and write essays, raising questions about academic dishonesty and learning outcomes for students. Further concerns have been raised over AI replacing or hindering human intelligence and making many jobs obsolete.

People are understandably uneasy about the unknown factors around AI and its potential impact on society. Although these concerns are valid, they can also serve as a reminder of our own values when using new technologies. An important distinction is that technology like ChatGPT can be used ethically and responsibly as a tool to assist with the work that we do and make it more efficient, rather than to replace it. I’ve learned this first-hand as a community researcher at RDI, using AI as a helpful and time-saving tool for generating content that can be useful to rural communities.

My Experience in Using AI

In my role at RDI over the past few months I’ve been working on a project that involves creating profiles for several rural and Indigenous communities across Manitoba, Nunavut, Ontario, and Australia. A community profile is typically a written description that encapsulates the story of a community through its demographics, history, and local economic activities. A community profile is a useful tool for community development as it provides a contextual background of a community that can help to identify the unique assets and challenges present in a community as well as help set priorities and guide its path forward.

The method for this has involved populating tables with demographic information, descriptions, and observations for each community, drawn from Statistics Canada, community and local government websites, and various other sources. Researching and populating these tables took around 45 minutes to an hour and a half per community. Typically, the next step would be to interpret the data and write out a community profile based on the information gathered. This step would likely take another 45 minutes to an hour and a half per community. On that basis, if you were tasked with writing profiles for 10 communities using the data from the tables, the job would likely take up a full day’s work, at the very least.
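To make that estimate concrete, here is a minimal Python sketch of the arithmetic. The function name and its parameters are hypothetical, chosen only for illustration; the 45 to 90 minute range and the 10 communities come from the paragraph above.

```python
def writing_hours(communities, min_minutes=45, max_minutes=90):
    """Return the (low, high) estimate in hours for writing profiles by hand.

    The per-community range of 45 to 90 minutes is the estimate given
    in the text; everything else here is an illustrative assumption.
    """
    low = communities * min_minutes / 60
    high = communities * max_minutes / 60
    return low, high


low, high = writing_hours(10)
print(f"Writing 10 profiles by hand: {low} to {high} hours")
```

At the low end, the writing step alone comes to about a full working day for 10 communities, and at the high end roughly two.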

By using an AI tool to assist with this work, I was able to generate profiles from the table data in less than 3 hours, with four options to choose from for each community (the AI will not write the exact same profile twice). It should be noted that the tool used for this was not ChatGPT, but another feature offered by OpenAI called “Playground.” When I simply copied and pasted the tables and asked the AI to create a community profile based on the data, it generated a well-written profile in a matter of seconds. So far, I’ve compiled four options for each of 14 communities in less than four hours of work. Some of this time was spent experimenting with which commands yield the best results, and then developing a procedure based on this. Following this procedure and using pre-gathered data ensures that the results are consistent in quality and writing style and require minimal fact-checking.
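For readers who like to see the idea spelled out, the copy-and-paste step can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the procedure actually used: the function name, the table fields, and the instruction wording are all hypothetical, and the values are placeholders rather than real community data. The sketch only assembles the text that would be pasted into a tool such as Playground; it does not call any AI service.

```python
def build_profile_prompt(community_name, table_rows):
    """Combine an instruction and table data into one block of prompt text.

    The instruction wording and field names are hypothetical examples.
    """
    lines = [
        f"Write a community profile for {community_name} based on the data below.",
        "",
    ]
    # Add one "label: value" line per table row, in the order given
    for label, value in table_rows.items():
        lines.append(f"{label}: {value}")
    return "\n".join(lines)


# Placeholder rows standing in for a researched table (not real data)
placeholder_rows = {
    "Population": "[value from table]",
    "Main industries": "[value from table]",
    "History notes": "[value from table]",
}

prompt = build_profile_prompt("Example Community", placeholder_rows)
print(prompt)
```

Keeping the instruction fixed and swapping in only the table data is one way to keep the style of the generated profiles consistent from one community to the next.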

Although concerns about the emergence of AI are certainly understandable, I expect the use of AI writing assistants to become more commonplace in the years to come. After all, adopting new technologies that improve efficiency and enhance our work is not an unprecedented concept. My experience working with AI has shown me that, if used correctly and responsibly, AI can serve as an exceedingly useful and effective tool for creating content that has value for researchers and, ultimately, for the communities we work with.
